AI in Production: The 2026 Runtime Execution Report

What AI actually runs.

Over the past year, AI adoption has been described as unprecedented in both speed and scale. To put that claim to the test, we analyzed what's actually running in production environments today. The results reveal how the world's largest companies are using AI and why runtime execution has become the clearest signal of AI risk.

Key Takeaways:

1. AI libraries are now as common as foundational data science tools, but are often dormant

AI libraries have become as common in modern environments as core data science packages, making them part of the normal software stack rather than a rare exception. But in many cases, they sit dormant: installed and available but not actively used, and often overlooked by monitoring. That gap matters, because even unused AI components can expand the attack surface and create hidden risk.

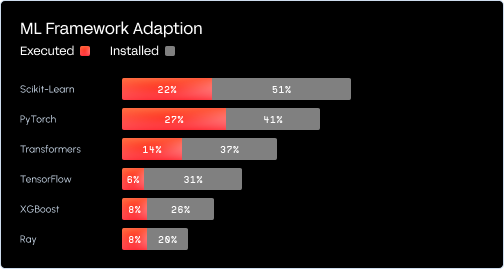

2. Execution rates are significantly lower than installation rates

Execution rates are far lower than installation rates, which means many AI libraries are present in environments without being actively used. That disconnect can make installed components look more relevant than they are and blur which ones truly matter in production. For defenders, the lesson is clear: prioritize what actually runs, not just what happens to be installed.

3. Agentic behavior is emerging organically rather than intentionally

Agentic behavior is beginning to emerge organically inside real environments, often as a byproduct of how AI tools, workflows, and integrations evolve over time. In many cases, organizations are not deliberately deploying “agents” as much as slowly accumulating agent-like behavior across systems. That makes visibility critical, because autonomous actions can appear before security teams fully realize they exist.

After reading this guide, you’ll be able to:

- See where AI is really showing up across your environment

- Understand the hidden risks quietly growing beneath the surface

- Learn what it takes to secure the next wave of AI-powered applications

Who is this for?

This report is for security leaders, practitioners, and technical teams who need to understand how AI is actually appearing inside their environments and what that means for risk, visibility, and control. It’s especially relevant for organizations building, adopting, or securing AI-powered applications.