The Definitive Guide to Runtime Vulnerability Prioritization

When developers write code, essentially a set of instructions for a computer to read and interpret, there is a large gap between the libraries and imports written into the code and the parts of that code that ultimately run in production. (For reference, read this post on the difference between loaded and executed libraries.)

This distinction is important, as it’s the basis of the chasm that has developed over the years between application security and developers. To date, the most popular scanners have scanned the entire codebase and alerted on every possible vulnerability in the written code. This can amount to thousands of CVEs detected after every scan, many of which are classified as “critical” or “high” severity.

A good example is a sample library that we need to ship with our code, which we’ll call “ExampleJS” (not a real library). Our code uses this library for the sole purpose of converting Fahrenheit to Celsius. But the same library can convert basically anything: imperial units to the metric system, any currency to another currency, or even fractions to decimals. When a chosen scanner runs, it will alert on vulnerabilities in many parts of this library’s code, even though only the code that handles Fahrenheit-to-Celsius conversion matters to our systems. This is the only part of the ExampleJS library that actually executes; the rest goes unused at runtime.
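To make the ExampleJS scenario concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the "library" is just four small functions standing in for the fictional ExampleJS, and the `library_function` decorator is our own bookkeeping, not a real scanner or runtime sensor. It only illustrates the gap between what ships and what runs.

```python
from functools import wraps

SHIPPED = []    # every function the hypothetical library ships
CALLED = set()  # the functions our application actually invoked


def library_function(func):
    """Register a library function and record each time it is called."""
    SHIPPED.append(func.__name__)

    @wraps(func)
    def wrapper(*args, **kwargs):
        CALLED.add(func.__name__)
        return func(*args, **kwargs)

    return wrapper


# --- stand-in for the fictional "ExampleJS" library ---
@library_function
def fahrenheit_to_celsius(fahrenheit):
    return (fahrenheit - 32) * 5 / 9


@library_function
def miles_to_kilometers(miles):
    return miles * 1.609344


@library_function
def fraction_to_decimal(numerator, denominator):
    return numerator / denominator


@library_function
def convert_currency(amount, rate):
    return amount * rate


# --- our application: it only ever needs one conversion ---
def application():
    return fahrenheit_to_celsius(212.0)


boiling_c = application()
unused = sorted(set(SHIPPED) - CALLED)

print(f"shipped: {len(SHIPPED)}, executed: {len(CALLED)}")
print("never executed:", unused)
```

A scanner that looks only at what is shipped would report on all four functions, while a fix in any of the three unused ones changes nothing about what runs in production.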

Every single day, developers need to juggle diverse customer-facing and “engineering” priorities. Customer-facing demands are business-driven, such as fixing functional or visual bugs, or shipping new and profitable features. Engineering is everything we need to do on the backend to ensure our systems and products function properly, from infrastructure operations and maintenance to security. There is always a fine balance between a developer’s load of product and engineering tasks at any given time.

Requesting an engineer’s time needs to be done with all this in mind, to ensure they prioritize security requests and take them seriously. In the ExampleJS case, most of the vulnerabilities that the static scanner finds in this library, and adds to the AppSec dashboard for tracking and mitigation, will be in code that is unused. Fixing the many CVEs in this library is therefore not relevant, and a waste of valuable engineering time.

Runtime Prioritization for Practical & Context-Driven Security

Developers know that a lot of the code they write never executes in production. So when an AppSec engineer demands a fix for a critical vulnerability, but the developer knows the vulnerable code can’t actually be executed, they won’t take the AppSec engineer seriously.

The only way to know for certain whether a vulnerability can be exploited in the code as it actually runs is to have a granular, real-time view of all libraries and functions, to differentiate at runtime between those that are merely loaded and those that are executed, and, on top of this, to have the right level of business and cloud context to provide the full picture of true risk exposure.

Runtime Context

It’s not enough that a library is loaded into RAM. With all the functions our OSS libraries are capable of, it’s important to understand which of these functions are actually executed when our program runs. Knowing a library is loaded does not give us sufficient visibility into whether its code is used during runtime.

We need to understand whether the loaded library will be executed and, even if it is (like ExampleJS), whether the specific vulnerable function will be executed during runtime.
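The loaded-versus-executed distinction can be seen in a few lines of Python. This is a rough, in-process illustration using the standard library's `sys.setprofile` hook. It is an assumption chosen for readability and is not how an eBPF-based or production runtime sensor works. Two standard-library modules are imported (loaded), but only one of them has any function called (executed).

```python
import colorsys  # loaded, but none of its functions are ever called below
import json      # loaded AND executed below
import sys

executed = set()  # (module name, function name) pairs observed at runtime


def profiler(frame, event, arg):
    # "call" fires whenever a Python-level function starts executing.
    if event == "call":
        module = frame.f_globals.get("__name__", "")
        executed.add((module, frame.f_code.co_name))


sys.setprofile(profiler)
try:
    json.dumps({"temp_c": 100})  # the only library call our "program" makes
finally:
    sys.setprofile(None)

# Reduce function-level observations to top-level module names.
executed_modules = {module.split(".")[0] for module, _ in executed}

for name in ("json", "colorsys"):
    print(
        f"{name}: loaded={name in sys.modules}, "
        f"executed={name in executed_modules}"
    )
```

Both modules sit in `sys.modules`, so a loaded-library view would treat them the same. Only the function-level trace shows that `json.dumps` ran and that nothing in `colorsys` did.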

Cloud migration, orchestration, and containerization have introduced many technical capabilities. Alongside emerging technology like eBPF, these capabilities make it possible to observe the call stack and what is happening in the bits and bytes of running code, without modifying the code and with little impact on performance. But sometimes, even runtime context is insufficient to qualify the severity of a vulnerability.

Business Context

Let's say we have 10 Kubernetes clusters, where each cluster has 100 namespaces. Each namespace is composed of pods, and then the containers that are run by those pods…the list goes on. This is true for each technology in our stack: each has its own layers and hierarchy, workload and use case, as well as production environments and demo environments.

What’s more, due to the nature of microservices and cloud-native architecture, there are higher-risk, business-critical services and lower-risk, less-critical services. Everything eventually depends on the business context: a payment app will require greater security and pose more risk to a business than a page sorting app. That is why, even if a function with a critical vulnerability is called at runtime, remediation priority drops if, in its business context, the function only applies to an internal demo environment or a low-priority service.

But is this enough context? Not yet.

Cloud Context

Now let’s dive even deeper. Let’s say we have a high severity vulnerability in a service with low business context. However: do we know if this service is connected to the internet or has access to sensitive application data? This will also impact how urgently we need to prioritize remediation. Data access and internet exposure are additional risks that need to be explored to assess the risk exposure. If there is a low-priority service, but this container can be reached from the public web, and it also has sufficient permissions to access sensitive data (such as PII), then this automatically elevates the risk.

Filtering the CVE Noise with Runtime Context

In the end, filtering the noise and focusing on the real risks to our organization is the ultimate goal when it comes to practical security, and a lot of Oligo’s research and development has been focused on simplifying this significantly.

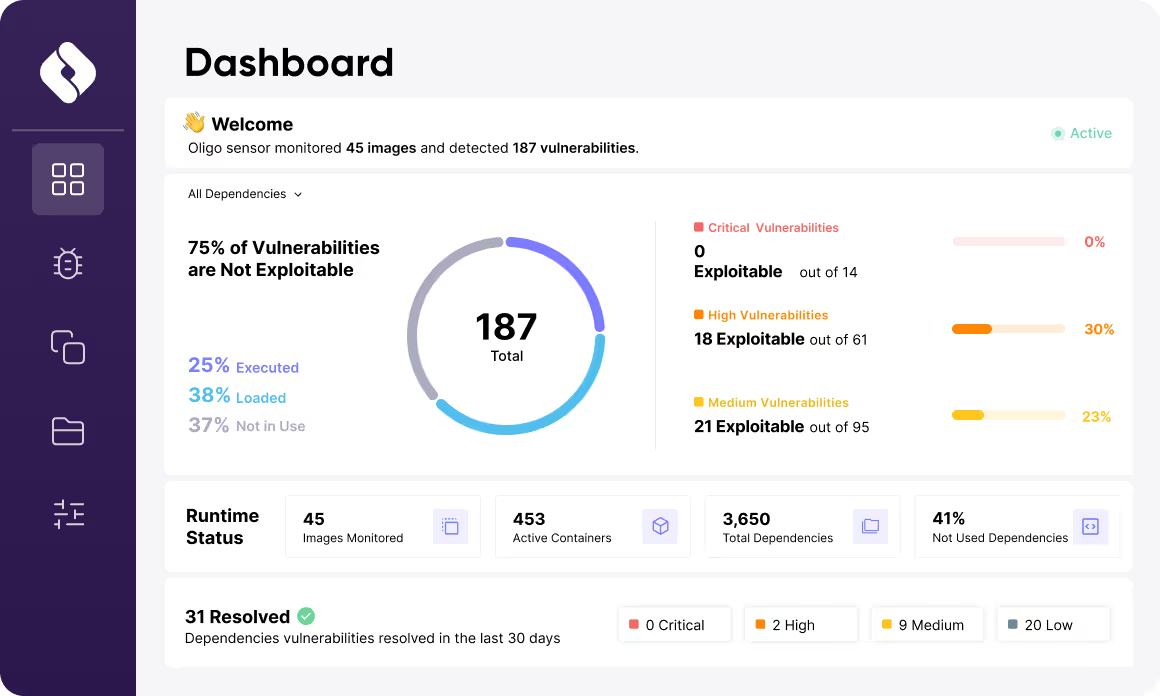

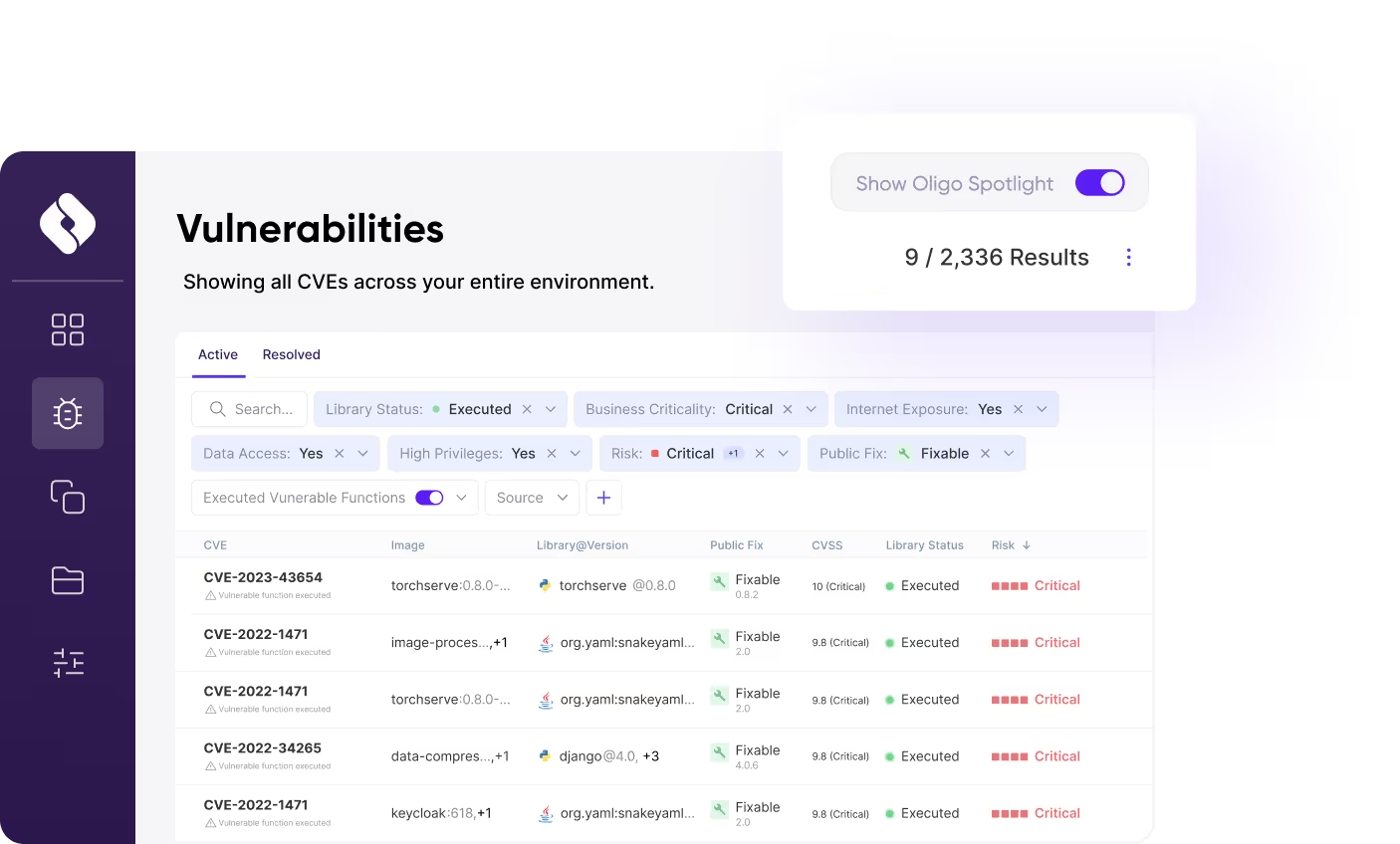

OSS scanners typically output thousands of vulnerabilities to their respective dashboards, across a range of severity classifications. However, if we start to toggle different parameters (executed libraries only, high business context, internet exposure, and data access), we suddenly have a completely different picture of vulnerability management and risk assessment, and a better strategy to manage and prioritize remediation.

If we take an example from the Oligo dashboard, what starts as thousands of vulnerabilities can be filtered down, through a few toggles, to tens or even a single-digit number of vulnerabilities. There is even a magic wand that will select the most important toggles for you!

We can then filter them even further.
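The effect of these toggles can be sketched as a plain filter over a list of findings. This is a simplified illustration, not the Oligo dashboard's implementation: the `Finding` fields, the `apply_toggles` function, and the placeholder CVE IDs are all made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    cve_id: str                  # placeholder ID, not a real CVE
    severity: str
    library_executed: bool       # runtime context
    high_business_context: bool  # business context
    internet_exposed: bool       # cloud context
    data_access: bool            # cloud context


def apply_toggles(findings, *, executed_only=False, high_business_only=False,
                  internet_exposed_only=False, data_access_only=False):
    """Keep only the findings that satisfy every toggle that is switched on."""
    kept = []
    for finding in findings:
        if executed_only and not finding.library_executed:
            continue
        if high_business_only and not finding.high_business_context:
            continue
        if internet_exposed_only and not finding.internet_exposed:
            continue
        if data_access_only and not finding.data_access:
            continue
        kept.append(finding)
    return kept


# Hypothetical scanner output; real lists run to thousands of entries.
FINDINGS = [
    Finding("CVE-0000-0001", "critical", False, True, True, True),
    Finding("CVE-0000-0002", "high", True, False, True, False),
    Finding("CVE-0000-0003", "high", True, True, False, True),
    Finding("CVE-0000-0004", "critical", True, True, True, True),
    Finding("CVE-0000-0005", "medium", False, False, False, False),
]

executed = apply_toggles(FINDINGS, executed_only=True)
shortlist = apply_toggles(
    FINDINGS,
    executed_only=True,
    high_business_only=True,
    internet_exposed_only=True,
    data_access_only=True,
)

print(len(FINDINGS), "->", len(executed), "->", len(shortlist))
print([finding.cve_id for finding in shortlist])
```

Each toggle only ever removes findings, so switching on more context narrows the list further. In this toy data set a "critical" finding drops out at the first toggle because its library never executes.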

Ok, CVEs Filtered—Now What?

Looking at our dashboard, if a high-severity vulnerability is found in an executed library with low business context, our first order of business is to warn developers not to use this library. We can take this one step further by creating an automated policy that prevents this library from being used in a business-critical context. That said, this vulnerability does not require critical remediation now, and we can reduce the cognitive load on the developer.

Then we look deeper and discover that another high-severity vulnerability in our dashboard has a function that is executed during runtime, but has no internet exposure. Internet exposure is what truly defines reachability and exploitability, so we know this vulnerability does not need to be prioritized.

The real gold is knowing when we have a high-severity vulnerability in a library that is loaded, running, and executed in the runtime context, is mission-critical in the business context, and has internet exposure and data access in the cloud context. This is the epitome of reachability and exploitability. BINGO. We should start here.
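The three cases above can be written down as a small decision function. This is a sketch under stated assumptions: the action labels (`fix-first`, `warn-and-restrict`, `deprioritize`, `review`) are our own names, the order of the checks is one possible reading of the walkthrough, and the final `review` fallback covers combinations the walkthrough does not discuss.

```python
def triage(*, executed, business_critical, internet_exposed, data_access):
    """Map the context of a high-severity finding to a suggested next step."""
    if not executed:
        # Vulnerable code that never runs is not where we start.
        return "deprioritize"
    if business_critical and internet_exposed and data_access:
        # Executed, mission-critical, exposed, and with data access.
        return "fix-first"
    if not business_critical:
        # Warn developers and restrict use in business-critical contexts.
        return "warn-and-restrict"
    if not internet_exposed:
        # Executed, but not reachable from the internet.
        return "deprioritize"
    # Assumption: anything else gets a manual look.
    return "review"


cases = {
    "executed, low business context": triage(
        executed=True, business_critical=False,
        internet_exposed=True, data_access=False),
    "executed, no internet exposure": triage(
        executed=True, business_critical=True,
        internet_exposed=False, data_access=True),
    "executed, critical, exposed, data access": triage(
        executed=True, business_critical=True,
        internet_exposed=True, data_access=True),
}

for description, action in cases.items():
    print(f"{description}: {action}")
```

Encoding the rules this way makes the reasoning behind each request explicit, which is the kind of context a developer can check before acting on it.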

Bridging the AppSec / Developer Divide with Context

When we as application security engineers come to our developers requesting an immediate security fix, they need to be able to take our high-priority requests seriously. This can only be achieved with sufficient data and context.

When we can pinpoint the function executed in the runtime context, the high-value applications exposed in the business context, and the level of internet exposure and data access in the cloud context, we can filter the CVE noise down to the handful of vulnerabilities that pose a real threat to our organization. By doing so, we empower developers to take quick and decisive action that will lead to greater prioritization of AppSec requests in the future.

With everything in the world moving towards data-driven decisions, application security should not be left behind. There is truly powerful technology making it possible to get down to the bits and bytes of runtime security, with additional parameters that help us prioritize based on relevant business and exposure context. All this together brings back into focus the importance of proper threat assessment and prioritization, while at the same time reducing alert fatigue, cognitive load, and developer toil. This is true runtime security unlocked––and you can (and should) get started immediately.
