Oligo ADR in Action: LLM Prompt Injection

TL;DR

King of LLM vulnerabilities, prompt injection happens when bad actors craft LLM inputs that cause the model to behave in a way it wasn't designed to. That poor behavior can range widely: from generating low quality, biased, or unethical output all the way to actually executing malicious code. Good design can mitigate the threat, but as we show in this blog, even good developers can make mistakes which means runtime detection is critical for LLM applications. Oligo ADR detects prompt injection attacks no matter the origin or payload, making it an ideal companion for LLM applications.

Introduction: Prompt Injection Exploits

The OWASP Top 10 for LLMs lists prompt injection as its number one concern and for good reason. LLMs are often connected to a large number of important resources and infrastructure including databases holding sensitive information, an organization's networks, and other critical internal and external applications – with risks flowing in many directions.

While prompt injections that cause LLMs to give up some sensitive or unethical information can certainly cause damage, the ones that let an attacker execute code are the bigger threat.

In this blog we'll go over two specific examples of exploitable CVEs where prompt injection can lead to remote code execution (RCE) and show how Oligo ADR detects and stops these exploits. These exploits work because of the way the prompt is processed in Python through open source library code, meaning they are not vulnerabilities specific to any particular AI model and will work on a wide range of models.

One exploit occurs in the code for LangChain, the other in LlamaIndex. But Oligo ADR doesn't care where an exploit is occurring or whether the exploit is cataloged in a vulnerability database: LLM clients, web applications, backend services, third party apps – wherever there are attempts to exploit an open source library, Oligo ADR can detect them in real time and stop them in their tracks, no CVE required.

(And by the way, Oligo ADR detects far more malicious activity than prompt injections—that's just the example we're writing about today.)

How Does Oligo ADR Detect Exploitation of Prompt Injections?

Oligo holds and constantly updates a huge database of runtime profiles for a wide range of third-party libraries. The Oligo Platform uses these profiles to figure out when libraries are behaving strangely, which indicates an active exploit beginning or in progress.

Take, for instance, the prebuilt Oligo profiles for the LangChain and LlamaIndex libraries. These profiles have never indicated any instance of code execution invocation within the prompt processing flow as part of their legitimate intended behavior.

As a result, Oligo promptly flags any attempt to trigger prompt injection exploits, automatically generating an incident report in the Oligo Application Detection & Response platform.

Unlike other security products, Oligo doesn’t rely on the way the vulnerability was exploited or which code was remotely executed. Whether it’s a data theft, DoS, or shell opening on the server - Oligo will detect the abnormal behavior of the library from the moment it begins.

CVE-2023-36258 (9.8)

Meet LangChain

LangChain is an open-source library that helps developers quickly create applications that connect with LLMs.

It defines "chains," which are plugins, as well as "agents," which are designed to take user input, transmit it to an LLM, and then use the LLM's output to take additional actions.

Since the LLM output feeds into these plugins, and the LLM output originates from user input, attackers can use prompt injection techniques to manipulate the functionality of poorly designed plugins.

A critical security flaw identified as CVE-2023-36258 was discovered in LangChain@0.0.235 PALChain chain implementation. Due to the way it's implemented in LangChain@0.0.235, using prompt injection against the PALChain plugin can lead to RCE.

CVE-2023-36258 was fixed in LangChain v0.0.236. Also, releases starting with LangChain v0.0.247 and onward do not include the PALChain class, as it moved to the LangChain-Experimental package.

A deep dive into CVE-2023-36258

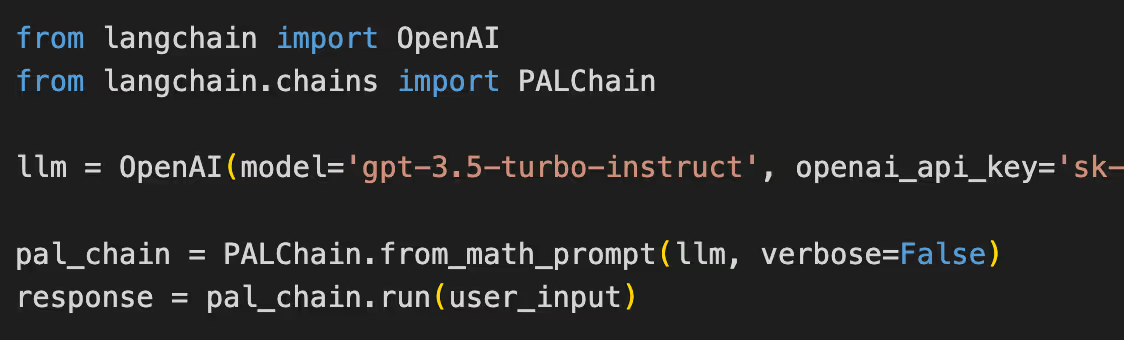

First, a typical vulnerable application will call the PALChain.run

Under the hood, the PALChain’s _call() function is called.

This function does two main things. First, it uses a generic LLMChain to understand the query we pass to it and get a prediction.

Second, it uses Python REPL to solve the function/program outputted by the LLM.

The Python REPL’s run() function, which is called, uses the built-in Python function - exec.

The exec function executes the function/program outputted by the LLM without any input validation. Therefore, a user can inject harmful content into the LLM, prompting it to generate desired Python code to be sent to evaluation, resulting in Remote Code Execution.

Exploitation and Detection of CVE-2023-36258 using Oligo ADR

In order to demonstrate the vulnerability exploitation, an attacker needs to provide the following prompt:

This exploitation demo was performed using the LangChain@0.0.235 version with the OpenAI gpt-3.5-turbo-instruct model as the base LLM - some modifications to the prompt will likely be required for other LLMs.

Example of remote code execution through prompt injection in the PALChain chain:

In the video above, you can see that when the Oligo sensor sees that the behavior of the LangChain library has been modified, it moves into action—immediately recognizing the behavior deviation and alerting the user.

While this exploit might have gone undetected for weeks or months in a typical application, Oligo ADR detects it instantly, so the attack stays limited and the security team can respond immediately.

CVE-2024-3098 (9.8)

Meet LlamaIndex

LlamaIndex is one of the leading data frameworks for building LLM applications. It provides the llama-index-core open-source library, which provides the core classes and building blocks for LLM applications in Python.

llama-index-core involves many types of query engines - interface that allows the user to ask questions over the data. PandasQueryEngine is one type of query engine that gets Pandas dataframe as input, and uses the LLM to analyze the data.

A critical security flaw identified as CVE-2023-39662 was discovered in llama-index up to version 0.7.13. It turned out that the PandasQueryEngine used the insecure eval function in the implementation, which could lead to remote code execution via prompt injection.

After this vulnerability was uncovered, the LlamaIndex developers changed the implementation and instead used the safe-eval function from the exec-utils class, which was intended to restrict the ability to execute arbitrary code through a prompt injection.

This implementation of the safe_eval function, however, was able to be bypassed, resulting in CVE-2024-3098 in llama-index-core.

A deep dive into CVE-2024-3098

While interacting with an LLM application that is based on the llama-index-core, a flow of function calls can lead to the evaluation of the user input.

A typical vulnerable application will call the query_engine.query vulnerable function with the prompt provided by the user.

Using a similar method to LangChain, we see that PandasQueryEngine.query function has two main roles. First, it calls llm.predict function to understand the query we pass to it and get a prediction. Second, it calls PandasInstructionParser.parse and passes it the prediction.

The PandasInstructionParser.parse function then calls the default_output_processo function, which in turn calls the safe_eval function with the LLM prediction as argument.

The funny (or sad) part here is that the LlamaIndex developers mentioned in a code comment that they were inspired by the same vulnerable methodology of langchain, mentioned in the last section, to evaluate their LLM prediction result.

The safe_eval function calls the eval with some restrictions, which were added in the fix of CVE-2023-39662 and were bypassed in CVE-2024-3098.

Exploitation and Detection using of CVE-2024-3098 using Oligo ADR

In order to exploit the vulnerability, the attacker needs to provide the following prompt:

This exploitation was performed using the llama-index-core@0.10.22 version.

Example of remote code execution via prompt injection in the llama-index-core PandasQueryEngine:

The video shows how the Oligo sensor immediately recognizes the exploitation of CVE-2024-3098 by comparing the behavior of the Llamaindex library during exploitation against its normal behavior.

Just as with the previous exploit, in this video you can see that as soon as the Llamaindex library's behavior changes, the Oligo sensor detects it and alerts the user. In the dashboard, the user can see further information on the attack including the exact timestamp for the process violation as well as the complete callstack.

Conclusion: Prompt Injection Protection

Prompt injection is a serious security risk that can lead to massive exploits, which is why OWASP considers it the biggest threat in AI.

Oligo Security has been at the forefront of keeping AI applications safe, using new techniques to detect and stop attacks on AI infrastructure faster than ever before.

This time, we’ve shown how Oligo ADR detects and protects organizations from prompt injection vulnerabilities, allowing unprecedented response speeds and stopping attacks from becoming major cyber loss events.

By identifying vulnerabilities (and not just from prompt injection) the moment they are exploited—even before they make headlines—the Oligo Platform helps you stay ahead of zero-day exploits.

The Oligo Platform takes just minutes to deploy. Get in touch with us to get started.

.svg)